March 2007 news archives

March 30, 2007

Anatomy of a server crash gone horribly wrong

On February 20th, a routine upgrade of the server software using the Debian package manager failed horribly. The most central software library of them all, the GNU C Library, failed to upgrade correctly, leaving the system largely non-functional. And to top it off, the Windows laptop followed suit with a prompt blue screen of death, thus killing the last open shell to the server. The web servers and databases were still happily up and running thanks to being isolated in their own self-contained jail environments, but almost everything else, including logging in, was dead in the water. A reboot gave the expected result: the system failed to boot. Kaput.

I forked over the $50 for a 24-hour rental of a remote console with various rescue tools and operating system reloaders to be able to make a last full backup before reinstalling. While at it, I figured I would go ahead and upgrade to Debian Etch, since it is just around the corner from becoming the new stable release. But the server configuration, while flexible and resistant to hard drive failure, proved to be a nightmare in this scenario where data had to be rescued off an unbootable system: the server was configured to mirror the data on two identical hard drives (RAID level 1), with most of the data residing on LVM volumes formatted with the ReiserFS filesystem. With a hardware card taking care of the mirroring, the two drives still transparently appeared as a single drive to the rescue disks. Alas, the rescue disks proved not to have the software libraries needed to mount the LVM volumes AND read the ReiserFS format. Goodbye quick backup, goodbye.

Plan B was to have the RAID array broken up by removing the hardware card, allowing a fresh install of the operating system with the requisite tools on one drive to access the original data on the second drive. This took a bit of constructive hard drive switching and bootloader configuration, as the boot information and drive partition tables turned out to be stored on the hardware RAID card itself, making the drive information inaccessible without it. In the end, fed up with facing yet another several-hour wait to have a hard drive removed, I bravely installed the new operating system while having the original drive plugged in as secondary master, with the feared result that I whacked up the partition table for the last original drive in the process. This was four days after the server crashed, two of which were spent waiting for the remote console to actually be hooked up and then be determined not to work in my server rack due to a local issue, which necessitated the temporary relocation of my server. By this time, I had arrived at the tranquil conclusion that losing the last few months of e-mail and a few scripts would not be the end of the world. And it wasn’t. Alea iacta est and whatnot; I still had a reasonably fresh full backup of all the web data, complemented by Google’s cache, so things were reasonably dandy.

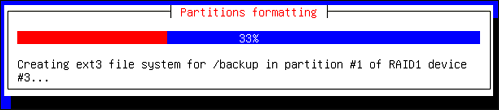

Armed with a new sense of how to reconfigure the server to better take this kind of disaster scenario into account, I had the techs remove the hardware RAID card for good and set out to install Debian Etch with software RAID. Things were not smooth sailing now either: the installer first refused to install Etch due to a hard-to-track-down Debootstrap error, the server chassis needed to be replaced due to a faulty keyboard port, and the techs made several mistakes (it did net me free access to the remote console for a few days and a free 512MB RAM addition for a current total of 2GB). In the end, it took an incredible eight days to get to the point where I could actually do a 30-minute fresh install of the system and have the server moved back to its regular slot.

With this much downtime already behind me and no paying clients on the server, I took the time to upgrade all software, to rewrite the jailing scripts where needed and, more than anything else, to hack on a comeback to be reckoned with for my virtual powerlifting project (soon to be relaunched, tighten your socks!)… basically doing things that would have needed to be done soon anyway. Apologies for the downtime; stay tuned for the payoff.

The final bits and pieces have now been restored here at tsampa.org; shoot me an e-mail if something is not working like it used to.
